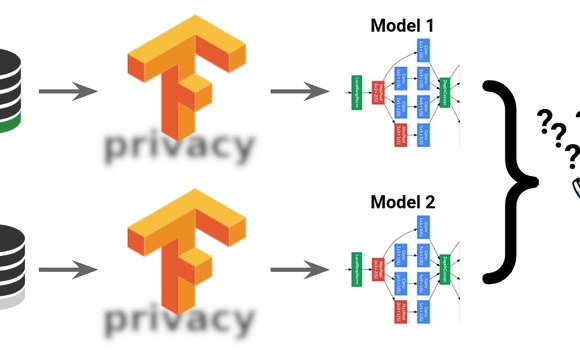

Posted by Carey Radebaugh (Product Manager) and Ulfar Erlingsson (Research Scientist)

Today, we’re excited to announce TensorFlow Privacy (GitHub), an open source library that makes it easier not only for developers to train machine-learning models with privacy, but also for researchers to advance the state of the art in machine learning with strong privacy guarantees.

Modern machine learning is increasingly applied to create amazing new technologies and user experiences, many of which involve training machines to learn responsibly from sensitive data, such as personal photos or email. Ideally, the parameters of trained machine-learning models should encode general patterns rather than facts about specific training examples. To ensure this, and to give strong privacy guarantees when the training data is sensitive, it is possible to use techniques based on the theory of differential privacy. In particular, when training on users’ data, those techniques offer strong mathematical guarantees that models do not learn or remember the details about any specific user. Especially for deep learning, the additional guarantees can usefully strengthen the protections offered by other privacy techniques, whether established ones, such as thresholding and data elision, or new ones, like TensorFlow Federated learning.

For several years, Google has spearheaded both foundational research on differential privacy and the development of practical differential-privacy mechanisms (see for example here and here), with a recent focus on machine learning applications (see this, that, or this research paper). Last year, Google published its Responsible AI Practices, detailing our recommended practices for the responsible development of machine learning systems and products; even before this publication, we have been working hard to make it easy for external developers to apply such practices in their own products.

One result of our efforts is today’s announcement of TensorFlow Privacy and the updated technical whitepaper describing its privacy mechanisms in more detail.

To use TensorFlow Privacy, no expertise in privacy or its underlying mathematics should be required: those using standard TensorFlow mechanisms should not have to change their model architectures, training procedures, or processes. Instead, to train models that protect privacy for their training data, it is often sufficient for you to make some simple code changes and tune the hyperparameters relevant to privacy.

An example: learning a language with privacy

As a concrete example of differentially-private training, let us consider the training of character-level, recurrent language models on text sequences. Language modeling using neural networks is an essential deep learning task, used in innumerable applications, many of which are based on training with sensitive data. We train two models, one in the standard manner and one with differential privacy, using the same model architecture, based on example code from the TensorFlow Privacy GitHub repository.

Both of the models do well on modeling the English language in financial news articles from the standard Penn Treebank training dataset. However, if the slight differences between the two models were due to a failure to capture some essential, core aspects of the language distribution, this would cast doubt on the utility of the differentially-private model. (On the other hand, the private model’s utility might still be fine, even if it failed to capture some esoteric, unique details in the training data.)
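To give a feel for what "hyperparameters relevant to privacy" can mean in practice, here is a minimal sketch of one differentially-private gradient step in plain Python. It assumes the commonly described DP-SGD recipe (clip each example's gradient, then add Gaussian noise), which this post does not spell out. The function names are hypothetical and are not the TensorFlow Privacy API; the parameter names `l2_norm_clip` and `noise_multiplier` are chosen to echo typical privacy hyperparameters, and the toy gradients are made up for illustration.

```python
import math
import random


def clip_by_l2_norm(grad, l2_norm_clip):
    """Scale one per-example gradient so its L2 norm is at most l2_norm_clip."""
    norm = math.sqrt(sum(g * g for g in grad))
    # Gradients already inside the clipping ball (or all-zero) are left unchanged.
    scale = min(1.0, l2_norm_clip / norm) if norm > 0.0 else 1.0
    return [g * scale for g in grad]


def private_mean_gradient(per_example_grads, l2_norm_clip, noise_multiplier, rng):
    """Clip each example's gradient, sum them, add Gaussian noise, then average.

    Hypothetical helper: a sketch of the assumed DP-SGD recipe, not library code.
    """
    clipped = [clip_by_l2_norm(g, l2_norm_clip) for g in per_example_grads]
    dim = len(clipped[0])
    summed = [sum(g[i] for g in clipped) for i in range(dim)]
    # Noise scale is tied to the clipping bound, which limits any one example's influence.
    stddev = noise_multiplier * l2_norm_clip
    noisy = [s + rng.gauss(0.0, stddev) for s in summed]
    return [v / len(clipped) for v in noisy]


def sgd_step(params, grad, learning_rate):
    """Ordinary gradient-descent update; only the gradient computation changed."""
    return [p - learning_rate * g for p, g in zip(params, grad)]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the toy run is repeatable
    toy_grads = [[3.0, 4.0], [0.3, 0.4], [-6.0, 8.0]]  # made-up per-example gradients
    grad = private_mean_gradient(toy_grads, l2_norm_clip=1.0, noise_multiplier=1.1, rng=rng)
    print(sgd_step([0.0, 0.0], grad, learning_rate=0.1))
```

In this sketch the model and the update rule are untouched; only the way the gradient is aggregated changes, which matches the post's point that architectures and training procedures need not be redesigned. A larger `noise_multiplier` or a smaller `l2_norm_clip` would generally trade some accuracy for stronger privacy.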
